OpenAI has started rolling out conventional ads in ChatGPT. It won’t stop there.

The inevitable has arrived. Ads have begun popping up on ChatGPT—even, reportedly, in initial responses to user queries, rather than after extended conversations—and some fans aren’t pleased. “RIP ChatGPT,” wrote one Reddit commenter. “It was fun while it lasted! 💔” The ads, which are being rolled out to free users and those who pay for the lowest-tier subscription ($8 per month), are rather familiar and banal in their presentation: a “sponsored” box pitching a product that ChatGPT’s algorithm thinks is relevant to the conversation, much as you’re used to seeing on social media platforms like Facebook and X.

An enduring feature of advertising is that it is “geographically imperialistic”: The best place to put an ad is where one doesn’t exist already. But the best type of ad to place is one that is unrecognizable as an ad. These truths should be kept in mind amid the rollout of ads on ChatGPT. Rest assured, this is just the beginning of how OpenAI, the creator of ChatGPT, will monetize its users. The company will undoubtedly graduate to more sophisticated ads, at which point the only question will be whether users even realize when they’re being monetized.

Artificial intelligence is an unfathomably expensive product to give away for free, yet that’s been OpenAI’s main strategy to achieve adoption. So it’s little wonder that the company is in dire financial straits, facing tens of billions of dollars in projected annual losses. How else to close that deficit save for digital billboards? The geographic expanse for commercial colonization—a reported 800 million weekly active users—was simply too vast for OpenAI to forgo.

So ChatGPT’s users are right to be bummed. Commercials clutter both the aesthetic and impetus of the online space. And the annoyance isn’t merely a pop-up to be blocked or a pre-roll to be skipped: Ads can’t help but corrupt the purpose of the content that they surround. Even OpenAI’s CEO, Sam Altman, has admitted that ad monetization is a real downer. “I think that ads plus AI is sort of uniquely unsettling to me,” Altman said in 2024. “When I think of GPT writing me a response, if I had to go figure out, Exactly how much was who paying here to influence what I’m being shown? I don’t think I would like that.” But he also, notably, did not rule out ads on ChatGPT in the future.

As the old adage goes: If you’re not paying for the product, then you are the product. For two centuries, the mass and social media industries depended on this bargain. Nascent newspapers of the “penny press” era could be sold below cost because advertisers subsidized the access to audiences. Likewise, today, no one pays for Google search or Instagram or TikTok.

AI represents a qualitatively different revelation. It renders all the knowledge of the internet conversationally interactive. It outsources our critical thinking skills and regresses our decision-making to the mean. It’s been designed to seem human to secure our trust. It seduces our affections and indulges our delusions, often sycophantically so. It subs in for our therapists and friends alike and helps us raise our children.

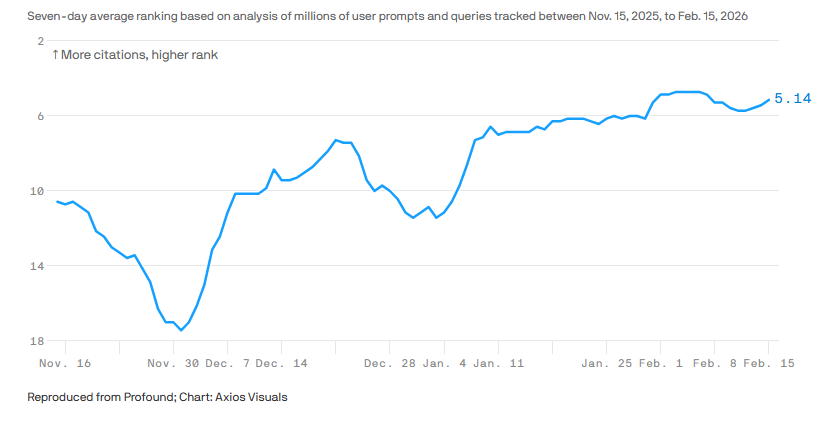

The consumer insights from that level of intellectual, emotional, and social intimacy exceed an advertiser’s wildest dreams. Fortuitously so: AI arrives at a confusing, anxious time on Madison Avenue. Google’s AI summaries are disintegrating the web as we know it, hastening a “zero-click” future, in which users have no need to avail themselves of the links below on the page. Hence, a shift from search engine optimization to “answer” or “generative” engine optimization: strategizing how brands and products appear, organically, in large language model inputs and outputs.

ChatGPT makes that roundabout sell a much straighter line—for a price. And it is reportedly a steep one—with ad rates nearing those of NFL games. Large language models might be a black box—in terms of why they do what they do—but that ad pricing suggests OpenAI knows exactly what a gold mine of personal data it is excavating daily.

That’s why we ought to treat OpenAI’s claims about its advertising with the same skepticism applied to the advertising itself. Sure, the company says it will insulate the ads as ostensibly independent from content. “Ads do not influence the answers ChatGPT gives you. Answers are optimized based on what’s most helpful to you. Ads are always separate and clearly labeled,” the company insists. “We keep your conversations with ChatGPT private from advertisers, and we never sell your data to advertisers.” But that leaves a lot of marketing money on the table—and from the outside, it sure looks like OpenAI needs that money to stay afloat.

Hence, the Super Bowl ad diss from OpenAI competitor Anthropic, the maker of Claude, whose commercial mocked the sponsored content that will inevitably intrude on and inundate ChatGPT feeds. But mount that high horse at your peril, Anthropic. Unless there’s a clever way to pay for all those server farms and microchips, all other AI platforms will probably have to follow suit. (And if the Pentagon cuts ties with Anthropic, as it’s threatening to do, that day may come even sooner.)

The history of social media foretells it: Platforms and their creators, once unspoiled by corporate backers, now pitch us relentlessly—and in increasingly devious ways. “Native” ads on Instagram and TikTok often look indistinguishable within the content, forming the basis of the $30 billion influencer industry. But the notion of placing an energy drink in the background of an influencer’s video will soon seem laughably conspicuous. By that point, the problem for ChatGPT users will no longer be that they notice and get annoyed with ads. The problem—and the real money to be made by OpenAI—will be when they don’t.

Feature image credit: Marcin Golba/NurPhoto/Getty Images

Michael Serazio is a professor of communication at Boston College and the author, most recently, of The Authenticity Industries: Keeping it ‘Real’ in Media, Culture, and Politics.